ECE3340 - HW 4A - Analysis of excerpts from the class

your name, ID

You must answer in the narrative style and in full sentences (good grammar and prose are a plus). Show your thinking and reasoning in your discussion or explanation. Do not just show a number or a graph as your answer. Your work is treated with the same respect as any technical or scientific article, in which figures and tables support the discussion rather than replace it. Hence, your discussion is the essence.

Instructor’s comments are in italic and highlighted in light red.

A number of students in the class did honest, excellent work and earned very high scores. It is worthwhile for you to review what your classmates did and to understand what is expected in this course.

1A. (40 pts) Choose either 1A (this) or 1B below.

Separate two musical sounds that incidentally overlapped in time.

Listen to the recording below:

It was a concerto mistake: the second instrument came in a half note too soon (~0.5 sec), before the first instrument had stopped. You will "sound-shop" (like "photoshop") the recording so that the second sound starts a half note later, immediately after the first sound stops.

See the Fourier Tutorial for an explanation.
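Since the separation is done in the Fourier domain, the basic operations can be sketched in Wolfram Language as follows. This is a minimal sketch under stated assumptions, not the author's code: the helper names bandKeep, delaySignal and mixSignals are hypothetical, a signal is assumed to be a plain list of real samples at sample rate srate (in Hz), and each band is a {low, high} pair in Hz. bandKeep keeps only the Fourier components inside the chosen bands, delaySignal shifts a track later by prepending Round[dt srate] zeros (for example, at an assumed 44.1 kHz rate, 0.5 s is 0.5 × 44100 = 22050 samples), and mixSignals adds two tracks of different lengths.

```mathematica
(* Hypothetical helper: keep only the Fourier components whose frequency
   (in Hz) lies inside one of the bands {{lo1, hi1}, {lo2, hi2}, ...}. *)
bandKeep[data_List, srate_, bands_List] :=
 Module[{n = Length[data], spec, freqs, mask},
  spec = Fourier[data];
  (* bin k (1-based) holds frequency (k - 1) srate/n; bins past n/2 are the
     mirrored negative frequencies, so fold them back before testing *)
  freqs = Table[Min[k - 1, n - k + 1] srate/n, {k, n}];
  mask = Table[If[AnyTrue[bands, (#[[1]] <= f <= #[[2]]) &], 1, 0], {f, freqs}];
  (* the mask is symmetric, so the result is real; Re drops round-off residue *)
  Re[InverseFourier[spec mask]]
 ]

(* Hypothetical helper: delay a sampled signal by dt seconds by
   prepending Round[dt srate] zeros. *)
delaySignal[data_List, srate_, dt_] :=
 Join[ConstantArray[0., Round[dt srate]], data]

(* Hypothetical helper: add two signals of different lengths sample by
   sample, padding the shorter one with trailing zeros. *)
mixSignals[a_List, b_List] :=
 With[{n = Max[Length[a], Length[b]]}, PadRight[a, n] + PadRight[b, n]]
```

Assuming the two instruments have already been isolated into two lists, first and second, that both span the original timeline, the corrected recording would be mixSignals[first, delaySignal[second, srate1, 0.5]], which can be played back with Audio[..., SampleRate -> srate1]. A hard 0/1 mask is the simplest choice; its sharp band edges can introduce some ringing, which smoother band edges would reduce.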

Instructor’s comments. Although the entire answer was already posted in this blog (and a few students unabashedly copied and pasted it into their HW), one does not learn anything without going through the steps and gaining insight. The work below was selected because the author described their understanding along the way.

If you wonder why some students get such high scores in the class, and why the HW asks such “stupid things” as the following:

You must answer in the narrative style ... Show your thinking and reasoning in your discussion or explanation. Do not just show a number or a graph as your answer.

then read through the various examples to find out why.

Author’s Answer (bold and highlighting are by the Instructor)

Instructor’s comments. Excellent work. This same problem has been given for many years, and this is the best answer the Instructor has ever seen, for two reasons:

1- the dedication of the work in using far more filter bands for both sounds than any previous submission;

2- the clarity of the explanation of the approach. Although previous work also had excellent explanations, this work has slightly more detail, so that someone who is totally unfamiliar with this course can completely understand what the author did.

If you wish to know what “Show your thinking and reasoning in your discussion or explanation” looks like, read the examples in this file; the one below is such an example.

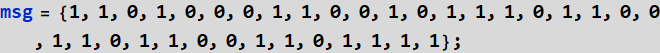

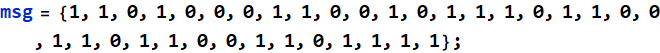

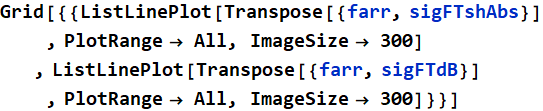

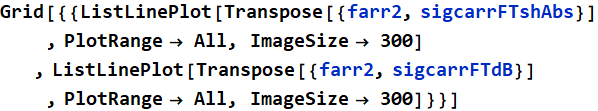

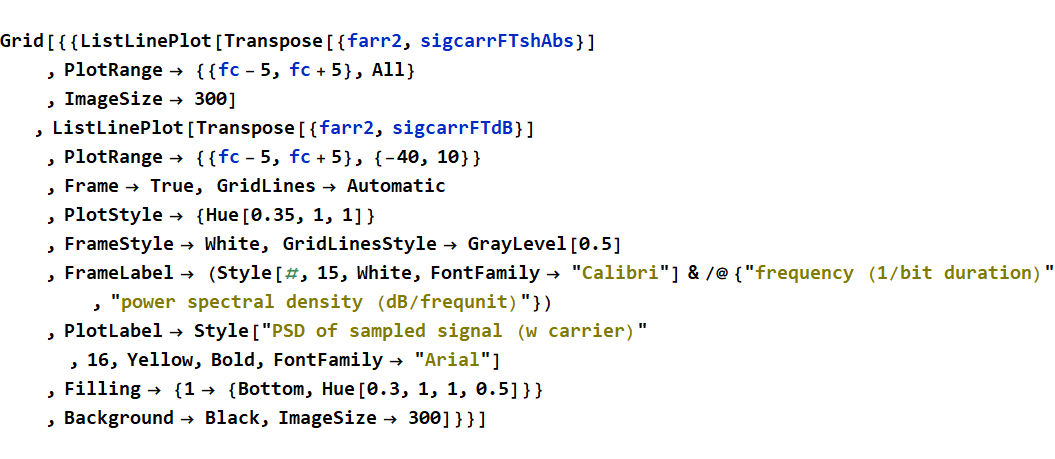

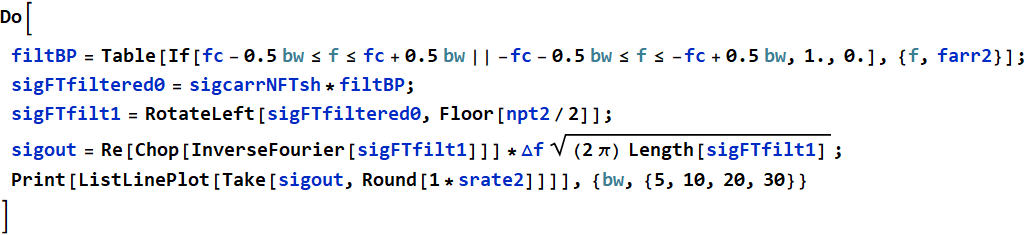

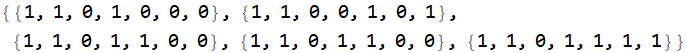

We would like to define an audiodataGet function to help us acquire the audio data of the sample as well as its sampling rate. The function returns two values, which are assigned to {x, srate1} so that we can later use them in the PSD analysis App.
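For comparison, here is a minimal sketch of such a function written with the documented accessor AudioSampleRate instead of Part indexing into the Audio expression. The name audiodataGet2 is hypothetical and this is not the author's code. The QuantityQ check is there because, depending on the Mathematica version, the sample rate may be returned as a Quantity in hertz rather than a plain number (an assumption worth checking in your own version).

```mathematica
(* Hypothetical variant: return the samples of the first channel together
   with the sample rate as a plain number in Hz. *)
audiodataGet2[xaudio_Audio] :=
 Module[{sr = AudioSampleRate[xaudio]},
  {First[AudioData[xaudio]], If[QuantityQ[sr], QuantityMagnitude[sr], sr]}]
```

It is used the same way, for example {x, srate1} = audiodataGet2[Audio[Table[Sin[2. Pi 440 k/8000], {k, 0, 7999}], SampleRate -> 8000]] gives 8000 samples in x and 8000 in srate1.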

In[265]:=

    audiodataGet[xaudio_] := {AudioData[#][[1]], #[[1, 2]]} & @ xaudio;
    {x, srate1} = audiodataGet[ (* Audio object of the recording, displayed inline in the notebook *) ]

![Graphics: Audio object passed to audiodataGet (waveform thumbnail; raster data not reproduced)

0.290766, 0.296421, 0.291071, 0.291936, 0.280191, 0.305065,

0.27244, 0.271393, 0.284126, 0.275812, 0.23777, 0.226512,

0.252025, 0.238851, 0.248671, 0.267721, 0.294617, 0.291678,

0.289626}, {0.450434, 0.441146, 0.430905, 0.436235, 0.415675,

0.422205, 0.397473, 0.392151, 0.376597, 0.366427, 0.368128,

0.372967, 0.372593, 0.353326, 0.352644, 0.341321, 0.336053,

0.320796, 0.327306, 0.288979, 0.308864, 0.28104, 0.286381,

0.262804, 0.275725, 0.259161, 0.215479, 0.244009, 0.219167,

0.219986, 0.164715, 0.213558, 0.178171, 0.140195, 0.170384,

0.12916, 0.150066, 0.164757, 0.189708, 0.140224, 0.247229,

0.266543, 0.260418, 0.221265, 0.239668, 0.28554, 0.20785,

0.245946, 0.22701, 0.270067, 0.187391, 0.272877, 0.227781,

0.248532, 0.273303, 0.287274, 0.26159, 0.246528, 0.217804,

0.200525, 0.220677, 0.233855, 0.260937, 0.256393, 0.267537,

0.252475, 0.284507, 0.281014, 0.279515, 0.246243, 0.270379,

0.286201, 0.256058, 0.264012, 0.276209, 0.254487, 0.289291,

0.26501, 0.274288, 0.253071, 0.268192, 0.300214, 0.280448,

0.286127, 0.261134, 0.277688, 0.276613, 0.29239, 0.281473,

0.268143, 0.277618, 0.278949, 0.265326, 0.292102, 0.279343,

0.264461, 0.284835, 0.286315, 0.287732, 0.290766, 0.296421,

0.291071, 0.291936, 0.280191, 0.305065, 0.27244, 0.271393,

0.284126, 0.275812, 0.23777, 0.226512, 0.252025, 0.238851,

0.248671, 0.267721, 0.294617, 0.291678, 0.289626}, {0.450434,

0.441146, 0.430905, 0.436235, 0.415675, 0.422205, 0.397473,

0.392151, 0.376597, 0.366427, 0.368128, 0.372967, 0.372593,

0.353326, 0.352644, 0.341321, 0.336053, 0.320796, 0.327306,

0.288979, 0.308864, 0.28104, 0.286381, 0.262804, 0.275725,

0.259161, 0.215479, 0.244009, 0.219167, 0.219986, 0.164715,

0.213558, 0.178171, 0.140195, 0.170384, 0.12916, 0.150066,

0.164757, 0.189708, 0.140224, 0.247229, 0.266543, 0.260418,

0.221265, 0.239668, 0.28554, 0.20785, 0.245946, 0.22701,

0.270067, 0.187391, 0.272877, 0.227781, 0.248532, 0.273303,

0.287274, 0.26159, 0.246528, 0.217804, 0.200525, 0.220677,

0.233855, 0.260937, 0.256393, 0.267537, 0.252475, 0.284507,

0.281014, 0.279515, 0.246243, 0.270379, 0.286201, 0.256058,

0.264012, 0.276209, 0.254487, 0.289291, 0.26501, 0.274288,

0.253071, 0.268192, 0.300214, 0.280448, 0.286127, 0.261134,

0.277688, 0.276613, 0.29239, 0.281473, 0.268143, 0.277618,

0.278949, 0.265326, 0.292102, 0.279343, 0.264461, 0.284835,

0.286315, 0.287732, 0.290766, 0.296421, 0.291071, 0.291936,

0.280191, 0.305065, 0.27244, 0.271393, 0.284126, 0.275812,

0.23777, 0.226512, 0.252025, 0.238851, 0.248671, 0.267721,

0.294617, 0.291678, 0.289626}, {0.450434, 0.441146, 0.430905,

0.436235, 0.415675, 0.422205, 0.397473, 0.392151, 0.376597,

0.366427, 0.368128, 0.372967, 0.372593, 0.353326, 0.352644,

0.341321, 0.336053, 0.320796, 0.327306, 0.288979, 0.308864,

0.28104, 0.286381, 0.262804, 0.275725, 0.259161, 0.215479,

0.244009, 0.219167, 0.219986, 0.164715, 0.213558, 0.178171,

0.140195, 0.170384, 0.12916, 0.150066, 0.164757, 0.189708,

0.140224, 0.247229, 0.266543, 0.260418, 0.221265, 0.239668,

0.28554, 0.20785, 0.245946, 0.22701, 0.270067, 0.187391,

0.272877, 0.227781, 0.248532, 0.273303, 0.287274, 0.26159,

0.246528, 0.217804, 0.200525, 0.220677, 0.233855, 0.260937,

0.256393, 0.267537, 0.252475, 0.284507, 0.281014, 0.279515,

0.246243, 0.270379, 0.286201, 0.256058, 0.264012, 0.276209,

0.254487, 0.289291, 0.26501, 0.274288, 0.253071, 0.268192,

0.300214, 0.280448, 0.286127, 0.261134, 0.277688, 0.276613,

0.29239, 0.281473, 0.268143, 0.277618, 0.278949, 0.265326,

0.292102, 0.279343, 0.264461, 0.284835, 0.286315, 0.287732,

0.290766, 0.296421, 0.291071, 0.291936, 0.280191, 0.305065,

0.27244, 0.271393, 0.284126, 0.275812, 0.23777, 0.226512,

0.252025, 0.238851, 0.248671, 0.267721, 0.294617, 0.291678,

0.289626}, {0.450434, 0.441146, 0.430905, 0.436235, 0.415675,

0.422205, 0.397473, 0.392151, 0.376597, 0.366427, 0.368128,

0.372967, 0.372593, 0.353326, 0.352644, 0.341321, 0.336053,

0.320796, 0.327306, 0.288979, 0.308864, 0.28104, 0.286381,

0.262804, 0.275725, 0.259161, 0.215479, 0.244009, 0.219167,

0.219986, 0.164715, 0.213558, 0.178171, 0.140195, 0.170384,

0.12916, 0.150066, 0.164757, 0.189708, 0.140224, 0.247229,

0.266543, 0.260418, 0.221265, 0.239668, 0.28554, 0.20785,

0.245946, 0.22701, 0.270067, 0.187391, 0.272877, 0.227781,

0.248532, 0.273303, 0.287274, 0.26159, 0.246528, 0.217804,

0.200525, 0.220677, 0.233855, 0.260937, 0.256393, 0.267537,

0.252475, 0.284507, 0.281014, 0.279515, 0.246243, 0.270379,

0.286201, 0.256058, 0.264012, 0.276209, 0.254487, 0.289291,

0.26501, 0.274288, 0.253071, 0.268192, 0.300214, 0.280448,

0.286127, 0.261134, 0.277688, 0.276613, 0.29239, 0.281473,

0.268143, 0.277618, 0.278949, 0.265326, 0.292102, 0.279343,

0.264461, 0.284835, 0.286315, 0.287732, 0.290766, 0.296421,

0.291071, 0.291936, 0.280191, 0.305065, 0.27244, 0.271393,

0.284126, 0.275812, 0.23777, 0.226512, 0.252025, 0.238851,

0.248671, 0.267721, 0.294617, 0.291678, 0.289626}, {0.450434,

0.441146, 0.430905, 0.436235, 0.415675, 0.422205, 0.397473,

0.392151, 0.376597, 0.366427, 0.368128, 0.372967, 0.372593,

0.353326, 0.352644, 0.341321, 0.336053, 0.320796, 0.327306,

0.288979, 0.308864, 0.28104, 0.286381, 0.262804, 0.275725,

0.259161, 0.215479, 0.244009, 0.219167, 0.219986, 0.164715,

0.213558, 0.178171, 0.140195, 0.170384, 0.12916, 0.150066,

0.164757, 0.189708, 0.140224, 0.247229, 0.266543, 0.260418,

0.221265, 0.239668, 0.28554, 0.20785, 0.245946, 0.22701,

0.270067, 0.187391, 0.272877, 0.227781, 0.248532, 0.273303,

0.287274, 0.26159, 0.246528, 0.217804, 0.200525, 0.220677,

0.233855, 0.260937, 0.256393, 0.267537, 0.252475, 0.284507,

0.281014, 0.279515, 0.246243, 0.270379, 0.286201, 0.256058,

0.264012, 0.276209, 0.254487, 0.289291, 0.26501, 0.274288,

0.253071, 0.268192, 0.300214, 0.280448, 0.286127, 0.261134,

0.277688, 0.276613, 0.29239, 0.281473, 0.268143, 0.277618,

0.278949, 0.265326, 0.292102, 0.279343, 0.264461, 0.284835,

0.286315, 0.287732, 0.290766, 0.296421, 0.291071, 0.291936,

0.280191, 0.305065, 0.27244, 0.271393, 0.284126, 0.275812,

0.23777, 0.226512, 0.252025, 0.238851, 0.248671, 0.267721,

0.294617, 0.291678, 0.289626}, {0.450434, 0.441146, 0.430905,

0.436235, 0.415675, 0.422205, 0.397473, 0.392151, 0.376597,

0.366427, 0.368128, 0.372967, 0.372593, 0.353326, 0.352644,

0.341321, 0.336053, 0.320796, 0.327306, 0.288979, 0.308864,

0.28104, 0.286381, 0.262804, 0.275725, 0.259161, 0.215479,

0.244009, 0.219167, 0.219986, 0.164715, 0.213558, 0.178171,

0.140195, 0.170384, 0.12916, 0.150066, 0.164757, 0.189708,

0.140224, 0.247229, 0.266543, 0.260418, 0.221265, 0.239668,

0.28554, 0.20785, 0.245946, 0.22701, 0.270067, 0.187391,

0.272877, 0.227781, 0.248532, 0.273303, 0.287274, 0.26159,

0.246528, 0.217804, 0.200525, 0.220677, 0.233855, 0.260937,

0.256393, 0.267537, 0.252475, 0.284507, 0.281014, 0.279515,

0.246243, 0.270379, 0.286201, 0.256058, 0.264012, 0.276209,

0.254487, 0.289291, 0.26501, 0.274288, 0.253071, 0.268192,

0.300214, 0.280448, 0.286127, 0.261134, 0.277688, 0.276613,

0.29239, 0.281473, 0.268143, 0.277618, 0.278949, 0.265326,

0.292102, 0.279343, 0.264461, 0.284835, 0.286315, 0.287732,

0.290766, 0.296421, 0.291071, 0.291936, 0.280191, 0.305065,

0.27244, 0.271393, 0.284126, 0.275812, 0.23777, 0.226512,

0.252025, 0.238851, 0.248671, 0.267721, 0.294617, 0.291678,

0.289626}, {0.450434, 0.441146, 0.430905, 0.436235, 0.415675,

0.422205, 0.397473, 0.392151, 0.376597, 0.366427, 0.368128,

0.372967, 0.372593, 0.353326, 0.352644, 0.341321, 0.336053,

0.320796, 0.327306, 0.288979, 0.308864, 0.28104, 0.286381,

0.262804, 0.275725, 0.259161, 0.215479, 0.244009, 0.219167,

0.219986, 0.164715, 0.213558, 0.178171, 0.140195, 0.170384,

0.12916, 0.150066, 0.164757, 0.189708, 0.140224, 0.247229,

0.266543, 0.260418, 0.221265, 0.239668, 0.28554, 0.20785,

0.245946, 0.22701, 0.270067, 0.187391, 0.272877, 0.227781,

0.248532, 0.273303, 0.287274, 0.26159, 0.246528, 0.217804,

0.200525, 0.220677, 0.233855, 0.260937, 0.256393, 0.267537,

0.252475, 0.284507, 0.281014, 0.279515, 0.246243, 0.270379,

0.286201, 0.256058, 0.264012, 0.276209, 0.254487, 0.289291,

0.26501, 0.274288, 0.253071, 0.268192, 0.300214, 0.280448,

0.286127, 0.261134, 0.277688, 0.276613, 0.29239, 0.281473,

0.268143, 0.277618, 0.278949, 0.265326, 0.292102, 0.279343,

0.264461, 0.284835, 0.286315, 0.287732, 0.290766, 0.296421,

0.291071, 0.291936, 0.280191, 0.305065, 0.27244, 0.271393,

0.284126, 0.275812, 0.23777, 0.226512, 0.252025, 0.238851,

0.248671, 0.267721, 0.294617, 0.291678, 0.289626}, {0.450434,

0.441146, 0.430905, 0.436235, 0.415675, 0.422205, 0.397473,

0.392151, 0.376597, 0.366427, 0.368128, 0.372967, 0.372593,

0.353326, 0.352644, 0.341321, 0.336053, 0.320796, 0.327306,

0.288979, 0.308864, 0.28104, 0.286381, 0.262804, 0.275725,

0.259161, 0.215479, 0.244009, 0.219167, 0.219986, 0.164715,

0.213558, 0.178171, 0.140195, 0.170384, 0.12916, 0.150066,

0.164757, 0.189708, 0.140224, 0.247229, 0.266543, 0.260418,

0.221265, 0.239668, 0.28554, 0.20785, 0.245946, 0.22701,

0.270067, 0.187391, 0.272877, 0.227781, 0.248532, 0.273303,

0.287274, 0.26159, 0.246528, 0.217804, 0.200525, 0.220677,

0.233855, 0.260937, 0.256393, 0.267537, 0.252475, 0.284507,

0.281014, 0.279515, 0.246243, 0.270379, 0.286201, 0.256058,

0.264012, 0.276209, 0.254487, 0.289291, 0.26501, 0.274288,

0.253071, 0.268192, 0.300214, 0.280448, 0.286127, 0.261134,

0.277688, 0.276613, 0.29239, 0.281473, 0.268143, 0.277618,

0.278949, 0.265326, 0.292102, 0.279343, 0.264461, 0.284835,

0.286315, 0.287732, 0.290766, 0.296421, 0.291071, 0.291936,

0.280191, 0.305065, 0.27244, 0.271393, 0.284126, 0.275812,

0.23777, 0.226512, 0.252025, 0.238851, 0.248671, 0.267721,

0.294617, 0.291678, 0.289626}, {0.450434, 0.441146, 0.430905,

0.436235, 0.415675, 0.422205, 0.397473, 0.392151, 0.376597,

0.366427, 0.368128, 0.372967, 0.372593, 0.353326, 0.352644,

0.341321, 0.336053, 0.320796, 0.327306, 0.288979, 0.308864,

0.28104, 0.286381, 0.262804, 0.275725, 0.259161, 0.215479,

0.244009, 0.219167, 0.219986, 0.164715, 0.213558, 0.178171,

0.140195, 0.170384, 0.12916, 0.150066, 0.164757, 0.189708,

0.140224, 0.247229, 0.266543, 0.260418, 0.221265, 0.239668,

0.28554, 0.20785, 0.245946, 0.22701, 0.270067, 0.187391,

0.272877, 0.227781, 0.248532, 0.273303, 0.287274, 0.26159,

0.246528, 0.217804, 0.200525, 0.220677, 0.233855, 0.260937,

0.256393, 0.267537, 0.252475, 0.284507, 0.281014, 0.279515,

0.246243, 0.270379, 0.286201, 0.256058, 0.264012, 0.276209,

0.254487, 0.289291, 0.26501, 0.274288, 0.253071, 0.268192,

0.300214, 0.280448, 0.286127, 0.261134, 0.277688, 0.276613,

0.29239, 0.281473, 0.268143, 0.277618, 0.278949, 0.265326,

0.292102, 0.279343, 0.264461, 0.284835, 0.286315, 0.287732,

0.290766, 0.296421, 0.291071, 0.291936, 0.280191, 0.305065,

0.27244, 0.271393, 0.284126, 0.275812, 0.23777, 0.226512,

0.252025, 0.238851, 0.248671, 0.267721, 0.294617, 0.291678,

0.289626}, {0.450434, 0.441146, 0.430905, 0.436235, 0.415675,

0.422205, 0.397473, 0.392151, 0.376597, 0.366427, 0.368128,

0.372967, 0.372593, 0.353326, 0.352644, 0.341321, 0.336053,

0.320796, 0.327306, 0.288979, 0.308864, 0.28104, 0.286381,

0.262804, 0.275725, 0.259161, 0.215479, 0.244009, 0.219167,

0.219986, 0.164715, 0.213558, 0.178171, 0.140195, 0.170384,

0.12916, 0.150066, 0.164757, 0.189708, 0.140224, 0.247229,

0.266543, 0.260418, 0.221265, 0.239668, 0.28554, 0.20785,

0.245946, 0.22701, 0.270067, 0.187391, 0.272877, 0.227781,

0.248532, 0.273303, 0.287274, 0.26159, 0.246528, 0.217804,

0.200525, 0.220677, 0.233855, 0.260937, 0.256393, 0.267537,

0.252475, 0.284507, 0.281014, 0.279515, 0.246243, 0.270379,

0.286201, 0.256058, 0.264012, 0.276209, 0.254487, 0.289291,

0.26501, 0.274288, 0.253071, 0.268192, 0.300214, 0.280448,

0.286127, 0.261134, 0.277688, 0.276613, 0.29239, 0.281473,

0.268143, 0.277618, 0.278949, 0.265326, 0.292102, 0.279343,

0.264461, 0.284835, 0.286315, 0.287732, 0.290766, 0.296421,

0.291071, 0.291936, 0.280191, 0.305065, 0.27244, 0.271393,

0.284126, 0.275812, 0.23777, 0.226512, 0.252025, 0.238851,

0.248671, 0.267721, 0.294617, 0.291678, 0.289626}, {0.119771,

0.150876, 0.147637, 0.181649, 0.143006, 0.109381, 0.136185,

0.194252, 0.191829, 0.124318, 0.149028, 0.149428, 0.0902791,

0.174586, 0.144796, 0.120407, 0.165507, 0.189246, 0.150104,

0.180982, 0.152796, 0.0965253, 0.120748, 0.187443, 0.160231,

0.165157, 0.143546, 0.155725, 0.117646, 0.125036, 0.126772,

0.135444, 0.141762, 0.170345, 0.114505, 0.116832, 0.16271,

0.174504, 0.163404, 0.152664, 0.182086, 0.121941, 0.141057,

0.174604, 0.131218, 0.140484, 0.188917, 0.127376, 0.16991,

0.156496, 0.119469, 0.164727, 0.113953, 0.181733, 0.200938,

0.210693, 0.170044, 0.192697, 0.147419, 0.202921, 0.208256,

0.216734, 0.200781, 0.177135, 0.177109, 0.139037, 0.162681,

0.0728616, 0.151225, 0.186282, 0.158087, 0.121905, 0.183381,

0.178484, 0.187736, 0.151122, 0.178234, 0.138905, 0.156034,

0.170861, 0.146859, 0.158404, 0.168949, 0.188883, 0.153032,

0.196801, 0.0506713, 0.176193, 0.166891, 0.155927, 0.136664,

0.177733, 0.142018, 0.169596, 0.108443, 0.159616, 0.126281,

0.130765, 0.101702, 0.175138, 0.163148, 0.164344, 0.169921,

0.154804, 0.157788, 0.128264, 0.10807, 0.137504, 0.168891,

0.13098, 0.125518, 0.15939, 0.110838, 0.158217, 0.165841,

0.163301, 0.140466, 0.147523}, {0.119771, 0.150876, 0.147637,

0.181649, 0.143006, 0.109381, 0.136185, 0.194252, 0.191829,

0.124318, 0.149028, 0.149428, 0.0902791, 0.174586, 0.144796,

0.120407, 0.165507, 0.189246, 0.150104, 0.180982, 0.152796,

0.0965253, 0.120748, 0.187443, 0.160231, 0.165157, 0.143546,

0.155725, 0.117646, 0.125036, 0.126772, 0.135444, 0.141762,

0.170345, 0.114505, 0.116832, 0.16271, 0.174504, 0.163404,

0.152664, 0.182086, 0.121941, 0.141057, 0.174604, 0.131218,

0.140484, 0.188917, 0.127376, 0.16991, 0.156496, 0.119469,

0.164727, 0.113953, 0.181733, 0.200938, 0.210693, 0.170044,

0.192697, 0.147419, 0.202921, 0.208256, 0.216734, 0.200781,

0.177135, 0.177109, 0.139037, 0.162681, 0.0728616, 0.151225,

0.186282, 0.158087, 0.121905, 0.183381, 0.178484, 0.187736,

0.151122, 0.178234, 0.138905, 0.156034, 0.170861, 0.146859,

0.158404, 0.168949, 0.188883, 0.153032, 0.196801, 0.0506713,

0.176193, 0.166891, 0.155927, 0.136664, 0.177733, 0.142018,

0.169596, 0.108443, 0.159616, 0.126281, 0.130765, 0.101702,

0.175138, 0.163148, 0.164344, 0.169921, 0.154804, 0.157788,

0.128264, 0.10807, 0.137504, 0.168891, 0.13098, 0.125518,

0.15939, 0.110838, 0.158217, 0.165841, 0.163301, 0.140466,

0.147523}, {0.119771, 0.150876, 0.147637, 0.181649, 0.143006,

0.109381, 0.136185, 0.194252, 0.191829, 0.124318, 0.149028,

0.149428, 0.0902791, 0.174586, 0.144796, 0.120407, 0.165507,

0.189246, 0.150104, 0.180982, 0.152796, 0.0965253, 0.120748,

0.187443, 0.160231, 0.165157, 0.143546, 0.155725, 0.117646,

0.125036, 0.126772, 0.135444, 0.141762, 0.170345, 0.114505,

0.116832, 0.16271, 0.174504, 0.163404, 0.152664, 0.182086,

0.121941, 0.141057, 0.174604, 0.131218, 0.140484, 0.188917,

0.127376, 0.16991, 0.156496, 0.119469, 0.164727, 0.113953,

0.181733, 0.200938, 0.210693, 0.170044, 0.192697, 0.147419,

0.202921, 0.208256, 0.216734, 0.200781, 0.177135, 0.177109,

0.139037, 0.162681, 0.0728616, 0.151225, 0.186282, 0.158087,

0.121905, 0.183381, 0.178484, 0.187736, 0.151122, 0.178234,

0.138905, 0.156034, 0.170861, 0.146859, 0.158404, 0.168949,

0.188883, 0.153032, 0.196801, 0.0506713, 0.176193, 0.166891,

0.155927, 0.136664, 0.177733, 0.142018, 0.169596, 0.108443,

0.159616, 0.126281, 0.130765, 0.101702, 0.175138, 0.163148,

0.164344, 0.169921, 0.154804, 0.157788, 0.128264, 0.10807,

0.137504, 0.168891, 0.13098, 0.125518, 0.15939, 0.110838,

0.158217, 0.165841, 0.163301, 0.140466, 0.147523}, {0.119771,

0.150876, 0.147637, 0.181649, 0.143006, 0.109381, 0.136185,

0.194252, 0.191829, 0.124318, 0.149028, 0.149428, 0.0902791,

0.174586, 0.144796, 0.120407, 0.165507, 0.189246, 0.150104,

0.180982, 0.152796, 0.0965253, 0.120748, 0.187443, 0.160231,

0.165157, 0.143546, 0.155725, 0.117646, 0.125036, 0.126772,

0.135444, 0.141762, 0.170345, 0.114505, 0.116832, 0.16271,

0.174504, 0.163404, 0.152664, 0.182086, 0.121941, 0.141057,

0.174604, 0.131218, 0.140484, 0.188917, 0.127376, 0.16991,

0.156496, 0.119469, 0.164727, 0.113953, 0.181733, 0.200938,

0.210693, 0.170044, 0.192697, 0.147419, 0.202921, 0.208256,

0.216734, 0.200781, 0.177135, 0.177109, 0.139037, 0.162681,

0.0728616, 0.151225, 0.186282, 0.158087, 0.121905, 0.183381,

0.178484, 0.187736, 0.151122, 0.178234, 0.138905, 0.156034,

0.170861, 0.146859, 0.158404, 0.168949, 0.188883, 0.153032,

0.196801, 0.0506713, 0.176193, 0.166891, 0.155927, 0.136664,

0.177733, 0.142018, 0.169596, 0.108443, 0.159616, 0.126281,

0.130765, 0.101702, 0.175138, 0.163148, 0.164344, 0.169921,

0.154804, 0.157788, 0.128264, 0.10807, 0.137504, 0.168891,

0.13098, 0.125518, 0.15939, 0.110838, 0.158217, 0.165841,

0.163301, 0.140466, 0.147523}, {0.119771, 0.150876, 0.147637,

0.181649, 0.143006, 0.109381, 0.136185, 0.194252, 0.191829,

0.124318, 0.149028, 0.149428, 0.0902791, 0.174586, 0.144796,

0.120407, 0.165507, 0.189246, 0.150104, 0.180982, 0.152796,

0.0965253, 0.120748, 0.187443, 0.160231, 0.165157, 0.143546,

0.155725, 0.117646, 0.125036, 0.126772, 0.135444, 0.141762,

0.170345, 0.114505, 0.116832, 0.16271, 0.174504, 0.163404,

0.152664, 0.182086, 0.121941, 0.141057, 0.174604, 0.131218,

0.140484, 0.188917, 0.127376, 0.16991, 0.156496, 0.119469,

0.164727, 0.113953, 0.181733, 0.200938, 0.210693, 0.170044,

0.192697, 0.147419, 0.202921, 0.208256, 0.216734, 0.200781,

0.177135, 0.177109, 0.139037, 0.162681, 0.0728616, 0.151225,

0.186282, 0.158087, 0.121905, 0.183381, 0.178484, 0.187736,

0.151122, 0.178234, 0.138905, 0.156034, 0.170861, 0.146859,

0.158404, 0.168949, 0.188883, 0.153032, 0.196801, 0.0506713,

0.176193, 0.166891, 0.155927, 0.136664, 0.177733, 0.142018,

0.169596, 0.108443, 0.159616, 0.126281, 0.130765, 0.101702,

0.175138, 0.163148, 0.164344, 0.169921, 0.154804, 0.157788,

0.128264, 0.10807, 0.137504, 0.168891, 0.13098, 0.125518,

0.15939, 0.110838, 0.158217, 0.165841, 0.163301, 0.140466,

0.147523}, {0.119771, 0.150876, 0.147637, 0.181649, 0.143006,

0.109381, 0.136185, 0.194252, 0.191829, 0.124318, 0.149028,

0.149428, 0.0902791, 0.174586, 0.144796, 0.120407, 0.165507,

0.189246, 0.150104, 0.180982, 0.152796, 0.0965253, 0.120748,

0.187443, 0.160231, 0.165157, 0.143546, 0.155725, 0.117646,

0.125036, 0.126772, 0.135444, 0.141762, 0.170345, 0.114505,

0.116832, 0.16271, 0.174504, 0.163404, 0.152664, 0.182086,

0.121941, 0.141057, 0.174604, 0.131218, 0.140484, 0.188917,

0.127376, 0.16991, 0.156496, 0.119469, 0.164727, 0.113953,

0.181733, 0.200938, 0.210693, 0.170044, 0.192697, 0.147419,

0.202921, 0.208256, 0.216734, 0.200781, 0.177135, 0.177109,

0.139037, 0.162681, 0.0728616, 0.151225, 0.186282, 0.158087,

0.121905, 0.183381, 0.178484, 0.187736, 0.151122, 0.178234,

0.138905, 0.156034, 0.170861, 0.146859, 0.158404, 0.168949,

0.188883, 0.153032, 0.196801, 0.0506713, 0.176193, 0.166891,

0.155927, 0.136664, 0.177733, 0.142018, 0.169596, 0.108443,

0.159616, 0.126281, 0.130765, 0.101702, 0.175138, 0.163148,

0.164344, 0.169921, 0.154804, 0.157788, 0.128264, 0.10807,

0.137504, 0.168891, 0.13098, 0.125518, 0.15939, 0.110838,

0.158217, 0.165841, 0.163301, 0.140466, 0.147523}, {0.119771,

0.150876, 0.147637, 0.181649, 0.143006, 0.109381, 0.136185,

0.194252, 0.191829, 0.124318, 0.149028, 0.149428, 0.0902791,

0.174586, 0.144796, 0.120407, 0.165507, 0.189246, 0.150104,

0.180982, 0.152796, 0.0965253, 0.120748, 0.187443, 0.160231,

0.165157, 0.143546, 0.155725, 0.117646, 0.125036, 0.126772,

0.135444, 0.141762, 0.170345, 0.114505, 0.116832, 0.16271,

0.174504, 0.163404, 0.152664, 0.182086, 0.121941, 0.141057,

0.174604, 0.131218, 0.140484, 0.188917, 0.127376, 0.16991,

0.156496, 0.119469, 0.164727, 0.113953, 0.181733, 0.200938,

0.210693, 0.170044, 0.192697, 0.147419, 0.202921, 0.208256,

0.216734, 0.200781, 0.177135, 0.177109, 0.139037, 0.162681,

0.0728616, 0.151225, 0.186282, 0.158087, 0.121905, 0.183381,

0.178484, 0.187736, 0.151122, 0.178234, 0.138905, 0.156034,

0.170861, 0.146859, 0.158404, 0.168949, 0.188883, 0.153032,

0.196801, 0.0506713, 0.176193, 0.166891, 0.155927, 0.136664,

0.177733, 0.142018, 0.169596, 0.108443, 0.159616, 0.126281,

0.130765, 0.101702, 0.175138, 0.163148, 0.164344, 0.169921,

0.154804, 0.157788, 0.128264, 0.10807, 0.137504, 0.168891,

0.13098, 0.125518, 0.15939, 0.110838, 0.158217, 0.165841,

0.163301, 0.140466, 0.147523}, {0.119771, 0.150876, 0.147637,

0.181649, 0.143006, 0.109381, 0.136185, 0.194252, 0.191829,

0.124318, 0.149028, 0.149428, 0.0902791, 0.174586, 0.144796,

0.120407, 0.165507, 0.189246, 0.150104, 0.180982, 0.152796,

0.0965253, 0.120748, 0.187443, 0.160231, 0.165157, 0.143546,

0.155725, 0.117646, 0.125036, 0.126772, 0.135444, 0.141762,

0.170345, 0.114505, 0.116832, 0.16271, 0.174504, 0.163404,

0.152664, 0.182086, 0.121941, 0.141057, 0.174604, 0.131218,

0.140484, 0.188917, 0.127376, 0.16991, 0.156496, 0.119469,

0.164727, 0.113953, 0.181733, 0.200938, 0.210693, 0.170044,

0.192697, 0.147419, 0.202921, 0.208256, 0.216734, 0.200781,

0.177135, 0.177109, 0.139037, 0.162681, 0.0728616, 0.151225,

0.186282, 0.158087, 0.121905, 0.183381, 0.178484, 0.187736,

0.151122, 0.178234, 0.138905, 0.156034, 0.170861, 0.146859,

0.158404, 0.168949, 0.188883, 0.153032, 0.196801, 0.0506713,

0.176193, 0.166891, 0.155927, 0.136664, 0.177733, 0.142018,

0.169596, 0.108443, 0.159616, 0.126281, 0.130765, 0.101702,

0.175138, 0.163148, 0.164344, 0.169921, 0.154804, 0.157788,

0.128264, 0.10807, 0.137504, 0.168891, 0.13098, 0.125518,

0.15939, 0.110838, 0.158217, 0.165841, 0.163301, 0.140466,

0.147523}, {0.119771, 0.150876, 0.147637, 0.181649, 0.143006,

0.109381, 0.136185, 0.194252, 0.191829, 0.124318, 0.149028,

0.149428, 0.0902791, 0.174586, 0.144796, 0.120407, 0.165507,

0.189246, 0.150104, 0.180982, 0.152796, 0.0965253, 0.120748,

0.187443, 0.160231, 0.165157, 0.143546, 0.155725, 0.117646,

0.125036, 0.126772, 0.135444, 0.141762, 0.170345, 0.114505,

0.116832, 0.16271, 0.174504, 0.163404, 0.152664, 0.182086,

0.121941, 0.141057, 0.174604, 0.131218, 0.140484, 0.188917,

0.127376, 0.16991, 0.156496, 0.119469, 0.164727, 0.113953,

0.181733, 0.200938, 0.210693, 0.170044, 0.192697, 0.147419,

0.202921, 0.208256, 0.216734, 0.200781, 0.177135, 0.177109,

0.139037, 0.162681, 0.0728616, 0.151225, 0.186282, 0.158087,

0.121905, 0.183381, 0.178484, 0.187736, 0.151122, 0.178234,

0.138905, 0.156034, 0.170861, 0.146859, 0.158404, 0.168949,

0.188883, 0.153032, 0.196801, 0.0506713, 0.176193, 0.166891,

0.155927, 0.136664, 0.177733, 0.142018, 0.169596, 0.108443,

0.159616, 0.126281, 0.130765, 0.101702, 0.175138, 0.163148,

0.164344, 0.169921, 0.154804, 0.157788, 0.128264, 0.10807,

0.137504, 0.168891, 0.13098, 0.125518, 0.15939, 0.110838,

0.158217, 0.165841, 0.163301, 0.140466, 0.147523}, {0.119771,

0.150876, 0.147637, 0.181649, 0.143006, 0.109381, 0.136185,

0.194252, 0.191829, 0.124318, 0.149028, 0.149428, 0.0902791,

0.174586, 0.144796, 0.120407, 0.165507, 0.189246, 0.150104,

0.180982, 0.152796, 0.0965253, 0.120748, 0.187443, 0.160231,

0.165157, 0.143546, 0.155725, 0.117646, 0.125036, 0.126772,

0.135444, 0.141762, 0.170345, 0.114505, 0.116832, 0.16271,

0.174504, 0.163404, 0.152664, 0.182086, 0.121941, 0.141057,

0.174604, 0.131218, 0.140484, 0.188917, 0.127376, 0.16991,

0.156496, 0.119469, 0.164727, 0.113953, 0.181733, 0.200938,

0.210693, 0.170044, 0.192697, 0.147419, 0.202921, 0.208256,

0.216734, 0.200781, 0.177135, 0.177109, 0.139037, 0.162681,

0.0728616, 0.151225, 0.186282, 0.158087, 0.121905, 0.183381,

0.178484, 0.187736, 0.151122, 0.178234, 0.138905, 0.156034,

0.170861, 0.146859, 0.158404, 0.168949, 0.188883, 0.153032,

0.196801, 0.0506713, 0.176193, 0.166891, 0.155927, 0.136664,

0.177733, 0.142018, 0.169596, 0.108443, 0.159616, 0.126281,

0.130765, 0.101702, 0.175138, 0.163148, 0.164344, 0.169921,

0.154804, 0.157788, 0.128264, 0.10807, 0.137504, 0.168891,

0.13098, 0.125518, 0.15939, 0.110838, 0.158217, 0.165841,

0.163301, 0.140466, 0.147523}, {0.119771, 0.150876, 0.147637,

0.181649, 0.143006, 0.109381, 0.136185, 0.194252, 0.191829,

0.124318, 0.149028, 0.149428, 0.0902791, 0.174586, 0.144796,

0.120407, 0.165507, 0.189246, 0.150104, 0.180982, 0.152796,

0.0965253, 0.120748, 0.187443, 0.160231, 0.165157, 0.143546,

0.155725, 0.117646, 0.125036, 0.126772, 0.135444, 0.141762,

0.170345, 0.114505, 0.116832, 0.16271, 0.174504, 0.163404,

0.152664, 0.182086, 0.121941, 0.141057, 0.174604, 0.131218,

0.140484, 0.188917, 0.127376, 0.16991, 0.156496, 0.119469,

0.164727, 0.113953, 0.181733, 0.200938, 0.210693, 0.170044,

0.192697, 0.147419, 0.202921, 0.208256, 0.216734, 0.200781,

0.177135, 0.177109, 0.139037, 0.162681, 0.0728616, 0.151225,

0.186282, 0.158087, 0.121905, 0.183381, 0.178484, 0.187736,

0.151122, 0.178234, 0.138905, 0.156034, 0.170861, 0.146859,

0.158404, 0.168949, 0.188883, 0.153032, 0.196801, 0.0506713,

0.176193, 0.166891, 0.155927, 0.136664, 0.177733, 0.142018,

0.169596, 0.108443, 0.159616, 0.126281, 0.130765, 0.101702,

0.175138, 0.163148, 0.164344, 0.169921, 0.154804, 0.157788,

0.128264, 0.10807, 0.137504, 0.168891, 0.13098, 0.125518,

0.15939, 0.110838, 0.158217, 0.165841, 0.163301, 0.140466,

0.147523}, {0.119771, 0.150876, 0.147637, 0.181649, 0.143006,

0.109381, 0.136185, 0.194252, 0.191829, 0.124318, 0.149028,

0.149428, 0.0902791, 0.174586, 0.144796, 0.120407, 0.165507,

0.189246, 0.150104, 0.180982, 0.152796, 0.0965253, 0.120748,

0.187443, 0.160231, 0.165157, 0.143546, 0.155725, 0.117646,

0.125036, 0.126772, 0.135444, 0.141762, 0.170345, 0.114505,

0.116832, 0.16271, 0.174504, 0.163404, 0.152664, 0.182086,

0.121941, 0.141057, 0.174604, 0.131218, 0.140484, 0.188917,

0.127376, 0.16991, 0.156496, 0.119469, 0.164727, 0.113953,

0.181733, 0.200938, 0.210693, 0.170044, 0.192697, 0.147419,

0.202921, 0.208256, 0.216734, 0.200781, 0.177135, 0.177109,

0.139037, 0.162681, 0.0728616, 0.151225, 0.186282, 0.158087,

0.121905, 0.183381, 0.178484, 0.187736, 0.151122, 0.178234,

0.138905, 0.156034, 0.170861, 0.146859, 0.158404, 0.168949,

0.188883, 0.153032, 0.196801, 0.0506713, 0.176193, 0.166891,

0.155927, 0.136664, 0.177733, 0.142018, 0.169596, 0.108443,

0.159616, 0.126281, 0.130765, 0.101702, 0.175138, 0.163148,

0.164344, 0.169921, 0.154804, 0.157788, 0.128264, 0.10807,

0.137504, 0.168891, 0.13098, 0.125518, 0.15939, 0.110838,

0.158217, 0.165841, 0.163301, 0.140466, 0.147523}, {0.119771,

0.150876, 0.147637, 0.181649, 0.143006, 0.109381, 0.136185,

0.194252, 0.191829, 0.124318, 0.149028, 0.149428, 0.0902791,

0.174586, 0.144796, 0.120407, 0.165507, 0.189246, 0.150104,

0.180982, 0.152796, 0.0965253, 0.120748, 0.187443, 0.160231,

0.165157, 0.143546, 0.155725, 0.117646, 0.125036, 0.126772,

0.135444, 0.141762, 0.170345, 0.114505, 0.116832, 0.16271,

0.174504, 0.163404, 0.152664, 0.182086, 0.121941, 0.141057,

0.174604, 0.131218, 0.140484, 0.188917, 0.127376, 0.16991,

0.156496, 0.119469, 0.164727, 0.113953, 0.181733, 0.200938,

0.210693, 0.170044, 0.192697, 0.147419, 0.202921, 0.208256,

0.216734, 0.200781, 0.177135, 0.177109, 0.139037, 0.162681,

0.0728616, 0.151225, 0.186282, 0.158087, 0.121905, 0.183381,

0.178484, 0.187736, 0.151122, 0.178234, 0.138905, 0.156034,

0.170861, 0.146859, 0.158404, 0.168949, 0.188883, 0.153032,

0.196801, 0.0506713, 0.176193, 0.166891, 0.155927, 0.136664,

0.177733, 0.142018, 0.169596, 0.108443, 0.159616, 0.126281,

0.130765, 0.101702, 0.175138, 0.163148, 0.164344, 0.169921,

0.154804, 0.157788, 0.128264, 0.10807, 0.137504, 0.168891,

0.13098, 0.125518, 0.15939, 0.110838, 0.158217, 0.165841,

0.163301, 0.140466, 0.147523}, {0.119771, 0.150876, 0.147637,

0.181649, 0.143006, 0.109381, 0.136185, 0.194252, 0.191829,

0.124318, 0.149028, 0.149428, 0.0902791, 0.174586, 0.144796,

0.120407, 0.165507, 0.189246, 0.150104, 0.180982, 0.152796,

0.0965253, 0.120748, 0.187443, 0.160231, 0.165157, 0.143546,

0.155725, 0.117646, 0.125036, 0.126772, 0.135444, 0.141762,

0.170345, 0.114505, 0.116832, 0.16271, 0.174504, 0.163404,

0.152664, 0.182086, 0.121941, 0.141057, 0.174604, 0.131218,

0.140484, 0.188917, 0.127376, 0.16991, 0.156496, 0.119469,

0.164727, 0.113953, 0.181733, 0.200938, 0.210693, 0.170044,

0.192697, 0.147419, 0.202921, 0.208256, 0.216734, 0.200781,

0.177135, 0.177109, 0.139037, 0.162681, 0.0728616, 0.151225,

0.186282, 0.158087, 0.121905, 0.183381, 0.178484, 0.187736,

0.151122, 0.178234, 0.138905, 0.156034, 0.170861, 0.146859,

0.158404, 0.168949, 0.188883, 0.153032, 0.196801, 0.0506713,

0.176193, 0.166891, 0.155927, 0.136664, 0.177733, 0.142018,

0.169596, 0.108443, 0.159616, 0.126281, 0.130765, 0.101702,

0.175138, 0.163148, 0.164344, 0.169921, 0.154804, 0.157788,

0.128264, 0.10807, 0.137504, 0.168891, 0.13098, 0.125518,

0.15939, 0.110838, 0.158217, 0.165841, 0.163301, 0.140466,

0.147523}, {0.119771, 0.150876, 0.147637, 0.181649, 0.143006,

0.109381, 0.136185, 0.194252, 0.191829, 0.124318, 0.149028,

0.149428, 0.0902791, 0.174586, 0.144796, 0.120407, 0.165507,

0.189246, 0.150104, 0.180982, 0.152796, 0.0965253, 0.120748,

0.187443, 0.160231, 0.165157, 0.143546, 0.155725, 0.117646,

0.125036, 0.126772, 0.135444, 0.141762, 0.170345, 0.114505,

0.116832, 0.16271, 0.174504, 0.163404, 0.152664, 0.182086,

0.121941, 0.141057, 0.174604, 0.131218, 0.140484, 0.188917,

0.127376, 0.16991, 0.156496, 0.119469, 0.164727, 0.113953,

0.181733, 0.200938, 0.210693, 0.170044, 0.192697, 0.147419,

0.202921, 0.208256, 0.216734, 0.200781, 0.177135, 0.177109,

0.139037, 0.162681, 0.0728616, 0.151225, 0.186282, 0.158087,

0.121905, 0.183381, 0.178484, 0.187736, 0.151122, 0.178234,

0.138905, 0.156034, 0.170861, 0.146859, 0.158404, 0.168949,

0.188883, 0.153032, 0.196801, 0.0506713, 0.176193, 0.166891,

0.155927, 0.136664, 0.177733, 0.142018, 0.169596, 0.108443,

0.159616, 0.126281, 0.130765, 0.101702, 0.175138, 0.163148,

0.164344, 0.169921, 0.154804, 0.157788, 0.128264, 0.10807,

0.137504, 0.168891, 0.13098, 0.125518, 0.15939, 0.110838,

0.158217, 0.165841, 0.163301, 0.140466, 0.147523}, {0.119771,

0.150876, 0.147637, 0.181649, 0.143006, 0.109381, 0.136185,

0.194252, 0.191829, 0.124318, 0.149028, 0.149428, 0.0902791,

0.174586, 0.144796, 0.120407, 0.165507, 0.189246, 0.150104,

0.180982, 0.152796, 0.0965253, 0.120748, 0.187443, 0.160231,

0.165157, 0.143546, 0.155725, 0.117646, 0.125036, 0.126772,

0.135444, 0.141762, 0.170345, 0.114505, 0.116832, 0.16271,

0.174504, 0.163404, 0.152664, 0.182086, 0.121941, 0.141057,

0.174604, 0.131218, 0.140484, 0.188917, 0.127376, 0.16991,

0.156496, 0.119469, 0.164727, 0.113953, 0.181733, 0.200938,

0.210693, 0.170044, 0.192697, 0.147419, 0.202921, 0.208256,

0.216734, 0.200781, 0.177135, 0.177109, 0.139037, 0.162681,

0.0728616, 0.151225, 0.186282, 0.158087, 0.121905, 0.183381,

0.178484, 0.187736, 0.151122, 0.178234, 0.138905, 0.156034,

0.170861, 0.146859, 0.158404, 0.168949, 0.188883, 0.153032,

0.196801, 0.0506713, 0.176193, 0.166891, 0.155927, 0.136664,

0.177733, 0.142018, 0.169596, 0.108443, 0.159616, 0.126281,

0.130765, 0.101702, 0.175138, 0.163148, 0.164344, 0.169921,

0.154804, 0.157788, 0.128264, 0.10807, 0.137504, 0.168891,

0.13098, 0.125518, 0.15939, 0.110838, 0.158217, 0.165841,

0.163301, 0.140466, 0.147523}, {0.119771, 0.150876, 0.147637,

0.181649, 0.143006, 0.109381, 0.136185, 0.194252, 0.191829,

0.124318, 0.149028, 0.149428, 0.0902791, 0.174586, 0.144796,

0.120407, 0.165507, 0.189246, 0.150104, 0.180982, 0.152796,

0.0965253, 0.120748, 0.187443, 0.160231, 0.165157, 0.143546,

0.155725, 0.117646, 0.125036, 0.126772, 0.135444, 0.141762,

0.170345, 0.114505, 0.116832, 0.16271, 0.174504, 0.163404,

0.152664, 0.182086, 0.121941, 0.141057, 0.174604, 0.131218,

0.140484, 0.188917, 0.127376, 0.16991, 0.156496, 0.119469,

0.164727, 0.113953, 0.181733, 0.200938, 0.210693, 0.170044,

0.192697, 0.147419, 0.202921, 0.208256, 0.216734, 0.200781,

0.177135, 0.177109, 0.139037, 0.162681, 0.0728616, 0.151225,

0.186282, 0.158087, 0.121905, 0.183381, 0.178484, 0.187736,

0.151122, 0.178234, 0.138905, 0.156034, 0.170861, 0.146859,

0.158404, 0.168949, 0.188883, 0.153032, 0.196801, 0.0506713,

0.176193, 0.166891, 0.155927, 0.136664, 0.177733, 0.142018,

0.169596, 0.108443, 0.159616, 0.126281, 0.130765, 0.101702,

0.175138, 0.163148, 0.164344, 0.169921, 0.154804, 0.157788,

0.128264, 0.10807, 0.137504, 0.168891, 0.13098, 0.125518,

0.15939, 0.110838, 0.158217, 0.165841, 0.163301, 0.140466,

0.147523}, {0.119771, 0.150876, 0.147637, 0.181649, 0.143006,

0.109381, 0.136185, 0.194252, 0.191829, 0.124318, 0.149028,

0.149428, 0.0902791, 0.174586, 0.144796, 0.120407, 0.165507,

0.189246, 0.150104, 0.180982, 0.152796, 0.0965253, 0.120748,

0.187443, 0.160231, 0.165157, 0.143546, 0.155725, 0.117646,

0.125036, 0.126772, 0.135444, 0.141762, 0.170345, 0.114505,

0.116832, 0.16271, 0.174504, 0.163404, 0.152664, 0.182086,

0.121941, 0.141057, 0.174604, 0.131218, 0.140484, 0.188917,

0.127376, 0.16991, 0.156496, 0.119469, 0.164727, 0.113953,

0.181733, 0.200938, 0.210693, 0.170044, 0.192697, 0.147419,

0.202921, 0.208256, 0.216734, 0.200781, 0.177135, 0.177109,

0.139037, 0.162681, 0.0728616, 0.151225, 0.186282, 0.158087,

0.121905, 0.183381, 0.178484, 0.187736, 0.151122, 0.178234,

0.138905, 0.156034, 0.170861, 0.146859, 0.158404, 0.168949,

0.188883, 0.153032, 0.196801, 0.0506713, 0.176193, 0.166891,

0.155927, 0.136664, 0.177733, 0.142018, 0.169596, 0.108443,

0.159616, 0.126281, 0.130765, 0.101702, 0.175138, 0.163148,

0.164344, 0.169921, 0.154804, 0.157788, 0.128264, 0.10807,

0.137504, 0.168891, 0.13098, 0.125518, 0.15939, 0.110838,

0.158217, 0.165841, 0.163301, 0.140466, 0.147523}, {0.119771,

0.150876, 0.147637, 0.181649, 0.143006, 0.109381, 0.136185,

0.194252, 0.191829, 0.124318, 0.149028, 0.149428, 0.0902791,

0.174586, 0.144796, 0.120407, 0.165507, 0.189246, 0.150104,

0.180982, 0.152796, 0.0965253, 0.120748, 0.187443, 0.160231,

0.165157, 0.143546, 0.155725, 0.117646, 0.125036, 0.126772,

0.135444, 0.141762, 0.170345, 0.114505, 0.116832, 0.16271,

0.174504, 0.163404, 0.152664, 0.182086, 0.121941, 0.141057,

0.174604, 0.131218, 0.140484, 0.188917, 0.127376, 0.16991,

0.156496, 0.119469, 0.164727, 0.113953, 0.181733, 0.200938,

0.210693, 0.170044, 0.192697, 0.147419, 0.202921, 0.208256,

0.216734, 0.200781, 0.177135, 0.177109, 0.139037, 0.162681,

0.0728616, 0.151225, 0.186282, 0.158087, 0.121905, 0.183381,

0.178484, 0.187736, 0.151122, 0.178234, 0.138905, 0.156034,

0.170861, 0.146859, 0.158404, 0.168949, 0.188883, 0.153032,

0.196801, 0.0506713, 0.176193, 0.166891, 0.155927, 0.136664,

0.177733, 0.142018, 0.169596, 0.108443, 0.159616, 0.126281,

0.130765, 0.101702, 0.175138, 0.163148, 0.164344, 0.169921,

0.154804, 0.157788, 0.128264, 0.10807, 0.137504, 0.168891,

0.13098, 0.125518, 0.15939, 0.110838, 0.158217, 0.165841,

0.163301, 0.140466, 0.147523}, {0.119771, 0.150876, 0.147637,

0.181649, 0.143006, 0.109381, 0.136185, 0.194252, 0.191829,

0.124318, 0.149028, 0.149428, 0.0902791, 0.174586, 0.144796,

0.120407, 0.165507, 0.189246, 0.150104, 0.180982, 0.152796,

0.0965253, 0.120748, 0.187443, 0.160231, 0.165157, 0.143546,

0.155725, 0.117646, 0.125036, 0.126772, 0.135444, 0.141762,

0.170345, 0.114505, 0.116832, 0.16271, 0.174504, 0.163404,

0.152664, 0.182086, 0.121941, 0.141057, 0.174604, 0.131218,

0.140484, 0.188917, 0.127376, 0.16991, 0.156496, 0.119469,

0.164727, 0.113953, 0.181733, 0.200938, 0.210693, 0.170044,

0.192697, 0.147419, 0.202921, 0.208256, 0.216734, 0.200781,

0.177135, 0.177109, 0.139037, 0.162681, 0.0728616, 0.151225,

0.186282, 0.158087, 0.121905, 0.183381, 0.178484, 0.187736,

0.151122, 0.178234, 0.138905, 0.156034, 0.170861, 0.146859,

0.158404, 0.168949, 0.188883, 0.153032, 0.196801, 0.0506713,

0.176193, 0.166891, 0.155927, 0.136664, 0.177733, 0.142018,

0.169596, 0.108443, 0.159616, 0.126281, 0.130765, 0.101702,

0.175138, 0.163148, 0.164344, 0.169921, 0.154804, 0.157788,

0.128264, 0.10807, 0.137504, 0.168891, 0.13098, 0.125518,

0.15939, 0.110838, 0.158217, 0.165841, 0.163301, 0.140466,

0.147523}, {0.119771, 0.150876, 0.147637, 0.181649, 0.143006,

0.109381, 0.136185, 0.194252, 0.191829, 0.124318, 0.149028,

0.149428, 0.0902791, 0.174586, 0.144796, 0.120407, 0.165507,

0.189246, 0.150104, 0.180982, 0.152796, 0.0965253, 0.120748,

0.187443, 0.160231, 0.165157, 0.143546, 0.155725, 0.117646,

0.125036, 0.126772, 0.135444, 0.141762, 0.170345, 0.114505,

0.116832, 0.16271, 0.174504, 0.163404, 0.152664, 0.182086,

0.121941, 0.141057, 0.174604, 0.131218, 0.140484, 0.188917,

0.127376, 0.16991, 0.156496, 0.119469, 0.164727, 0.113953,

0.181733, 0.200938, 0.210693, 0.170044, 0.192697, 0.147419,

0.202921, 0.208256, 0.216734, 0.200781, 0.177135, 0.177109,

0.139037, 0.162681, 0.0728616, 0.151225, 0.186282, 0.158087,

0.121905, 0.183381, 0.178484, 0.187736, 0.151122, 0.178234,

0.138905, 0.156034, 0.170861, 0.146859, 0.158404, 0.168949,

0.188883, 0.153032, 0.196801, 0.0506713, 0.176193, 0.166891,

0.155927, 0.136664, 0.177733, 0.142018, 0.169596, 0.108443,

0.159616, 0.126281, 0.130765, 0.101702, 0.175138, 0.163148,

0.164344, 0.169921, 0.154804, 0.157788, 0.128264, 0.10807,

0.137504, 0.168891, 0.13098, 0.125518, 0.15939, 0.110838,

0.158217, 0.165841, 0.163301, 0.140466, 0.147523}, {0.119771,

0.150876, 0.147637, 0.181649, 0.143006, 0.109381, 0.136185,

0.194252, 0.191829, 0.124318, 0.149028, 0.149428, 0.0902791,

0.174586, 0.144796, 0.120407, 0.165507, 0.189246, 0.150104,

0.180982, 0.152796, 0.0965253, 0.120748, 0.187443, 0.160231,

0.165157, 0.143546, 0.155725, 0.117646, 0.125036, 0.126772,

0.135444, 0.141762, 0.170345, 0.114505, 0.116832, 0.16271,

0.174504, 0.163404, 0.152664, 0.182086, 0.121941, 0.141057,

0.174604, 0.131218, 0.140484, 0.188917, 0.127376, 0.16991,

0.156496, 0.119469, 0.164727, 0.113953, 0.181733, 0.200938,

0.210693, 0.170044, 0.192697, 0.147419, 0.202921, 0.208256,

0.216734, 0.200781, 0.177135, 0.177109, 0.139037, 0.162681,

0.0728616, 0.151225, 0.186282, 0.158087, 0.121905, 0.183381,

0.178484, 0.187736, 0.151122, 0.178234, 0.138905, 0.156034,

0.170861, 0.146859, 0.158404, 0.168949, 0.188883, 0.153032,

0.196801, 0.0506713, 0.176193, 0.166891, 0.155927, 0.136664,

0.177733, 0.142018, 0.169596, 0.108443, 0.159616, 0.126281,

0.130765, 0.101702, 0.175138, 0.163148, 0.164344, 0.169921,

0.154804, 0.157788, 0.128264, 0.10807, 0.137504, 0.168891,

0.13098, 0.125518, 0.15939, 0.110838, 0.158217, 0.165841,

0.163301, 0.140466, 0.147523}, {0.119771, 0.150876, 0.147637,

0.181649, 0.143006, 0.109381, 0.136185, 0.194252, 0.191829,

0.124318, 0.149028, 0.149428, 0.0902791, 0.174586, 0.144796,

0.120407, 0.165507, 0.189246, 0.150104, 0.180982, 0.152796,

0.0965253, 0.120748, 0.187443, 0.160231, 0.165157, 0.143546,

0.155725, 0.117646, 0.125036, 0.126772, 0.135444, 0.141762,

0.170345, 0.114505, 0.116832, 0.16271, 0.174504, 0.163404,

0.152664, 0.182086, 0.121941, 0.141057, 0.174604, 0.131218,

0.140484, 0.188917, 0.127376, 0.16991, 0.156496, 0.119469,

0.164727, 0.113953, 0.181733, 0.200938, 0.210693, 0.170044,

0.192697, 0.147419, 0.202921, 0.208256, 0.216734, 0.200781,

0.177135, 0.177109, 0.139037, 0.162681, 0.0728616, 0.151225,

0.186282, 0.158087, 0.121905, 0.183381, 0.178484, 0.187736,

0.151122, 0.178234, 0.138905, 0.156034, 0.170861, 0.146859,

0.158404, 0.168949, 0.188883, 0.153032, 0.196801, 0.0506713,

0.176193, 0.166891, 0.155927, 0.136664, 0.177733, 0.142018,

0.169596, 0.108443, 0.159616, 0.126281, 0.130765, 0.101702,

0.175138, 0.163148, 0.164344, 0.169921, 0.154804, 0.157788,

0.128264, 0.10807, 0.137504, 0.168891, 0.13098, 0.125518,

0.15939, 0.110838, 0.158217, 0.165841, 0.163301, 0.140466,

0.147523}, {0.119771, 0.150876, 0.147637, 0.181649, 0.143006,

0.109381, 0.136185, 0.194252, 0.191829, 0.124318, 0.149028,

0.149428, 0.0902791, 0.174586, 0.144796, 0.120407, 0.165507,